Level 1 — Absolute Beginner

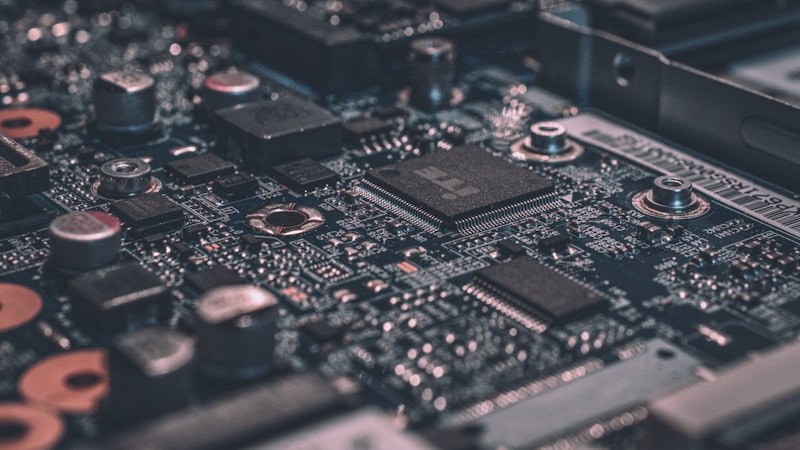

A company called Cerebras makes very big computer chips. These chips help computers learn and think. The company wants to sell parts of itself to people who want to invest money. This is called an IPO.

Cerebras wants to raise up to $3.5 billion. That is a very large amount of money. The company will sell 28 million shares. Each share will cost between $115 and $125. People can buy these shares on the Nasdaq stock market.

Cerebras competes with a bigger company called Nvidia. Nvidia also makes chips for artificial intelligence. But the Cerebras chip is special because it is very large — 56 times bigger than Nvidia's biggest chip. The big size helps it work faster.

- chip: A small, flat piece of silicon inside a computer that processes information.
- IPO: Initial Public Offering, when a company sells shares to the public for the first time.
- invest: To put money into something, hoping to make more money later.
- shares: Small parts of a company that people can buy and own.
- billion: The number 1,000,000,000 (one thousand million).
- stock market: A place where people buy and sell shares of companies.
- artificial intelligence: Computer systems that can do tasks that normally need human thinking.
- compete: To try to do better than someone else in the same area.

Level 2 — Elementary

Cerebras Systems is an American technology company that designs and builds computer chips for artificial intelligence. Unlike most chip companies, Cerebras makes chips that are extremely large. Its latest chip covers an entire silicon wafer, which is the round disc used to manufacture regular chips. This makes the Cerebras chip 56 times larger than Nvidia's biggest GPU.

The company recently filed paperwork to go public on the Nasdaq stock exchange. Going public means the company will sell shares to ordinary investors for the first time. This process is called an Initial Public Offering, or IPO. Cerebras plans to sell 28 million shares at a price between $115 and $125 per share.

If the IPO succeeds at the top of the price range, Cerebras could raise up to $3.5 billion. This would make it one of the largest technology IPOs in recent years. The money raised will help Cerebras grow its business and build more of its advanced chips to meet the huge demand for AI computing power.

Cerebras faces strong competition from Nvidia, which currently dominates the market for AI training chips. However, Cerebras believes its wafer-scale approach gives it an advantage. Because the chip is so large, it can process more data at once, which can make training AI models faster and more efficient.

- silicon wafer: A thin, round disc of silicon used to make computer chips.
- GPU: Graphics Processing Unit, a chip originally designed for graphics and now widely used for AI.
- stock exchange: An organized market where shares of companies are bought and sold.
- investors: People or organizations that put money into a company hoping to profit.
- filed paperwork: Submitted official documents to a government agency.
- price range: The lowest and highest prices being considered for something.
- dominates: Has the most power or control in a particular area.
- wafer-scale: Describes a chip that uses an entire silicon wafer instead of a small piece.
- efficient: Working well without wasting time, energy, or resources.
- demand: The desire or need for a product or service from many people.

Level 3 — Intermediate

Cerebras Systems, a Silicon Valley startup that has staked its future on building the world's largest computer chips, has filed updated paperwork with the U.S. Securities and Exchange Commission for an initial public offering on the Nasdaq stock exchange. The company plans to offer 28 million shares priced between $115 and $125 each, which could raise as much as $3.5 billion — making it one of the most ambitious technology IPOs in recent memory.

What sets Cerebras apart from its competitors is its radical approach to chip design. While conventional chips are cut from small sections of a silicon wafer, Cerebras uses the entire wafer as a single chip. The result is a processor that is 56 times larger than Nvidia's flagship H100 GPU. This enormous size allows the chip to hold more transistors, more memory, and more processing cores, all connected without the delays that come from linking separate chips together.

The timing of the IPO reflects the explosive growth in demand for AI computing hardware. Companies across every industry are racing to train large language models and other AI systems, and the chips that power this training have become some of the most sought-after technology products in the world. Nvidia has captured the lion's share of this market, but Cerebras argues that its wafer-scale engine offers superior performance for certain AI workloads, particularly those involving very large models.

However, the path to a successful IPO has not been entirely smooth. The company's earlier filing stalled amid concerns about Cerebras's heavy reliance on a small number of customers, including a large contract with an Abu Dhabi technology group backed by a sovereign wealth fund. Regulatory scrutiny of AI chip exports to certain regions added another layer of complexity. The updated filing appears to have addressed these concerns, clearing the way for the offering to proceed.

If the IPO succeeds, it will provide Cerebras with substantial capital to expand its manufacturing capacity and invest in next-generation chip designs. The outcome will also serve as a barometer for investor appetite in the AI hardware sector, which has seen valuations soar but also faces questions about long-term sustainability and the concentration of market power among a handful of dominant players.

- Securities and Exchange Commission: The U.S. government agency that regulates stock markets and protects investors.
- flagship: The best or most important product in a company's lineup.
- transistors: Tiny electronic switches inside chips that process and store data.
- processing cores: Individual units within a chip that carry out computing tasks.
- large language models: AI systems trained on massive text data to understand and generate human language.
- sought-after: In great demand; wanted by many people or companies.

Level 4 — Advanced

Cerebras Systems, the AI semiconductor startup renowned for its unconventional approach to processor design, has filed an amended S-1 registration statement with the U.S. Securities and Exchange Commission, setting the stage for what could become one of the most significant technology IPOs in years. The company intends to offer approximately 28 million Class A common shares at an anticipated price range of $115 to $125 per share, potentially raising as much as $3.5 billion and implying a fully diluted valuation in the vicinity of $14 billion.
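The $3.5 billion headline figure is simply the share count multiplied by the top of the price range; pricing at the bottom of the range would bring in less. The rough check below assumes exactly 28 million shares are sold and ignores underwriting fees and any over-allotment option, so it describes gross proceeds only:

$$
\begin{aligned}
28{,}000{,}000 \times \$125 &= \$3{,}500{,}000{,}000 = \$3.5\ \text{billion} \quad \text{(top of range)} \\
28{,}000{,}000 \times \$115 &= \$3{,}220{,}000{,}000 = \$3.22\ \text{billion} \quad \text{(bottom of range)}
\end{aligned}
$$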

At the heart of Cerebras's value proposition is its wafer-scale engine, a processor that defies the fundamental convention of semiconductor manufacturing. Standard practice dictates that individual chips, or dies, are cut from a silicon wafer, typically yielding dozens to hundreds of separate processors from a single 300-millimeter disc. Cerebras instead fabricates its entire processor on the full wafer, producing a monolithic chip containing 4 trillion transistors, 900,000 AI-optimized computing cores, and 44 gigabytes of on-chip memory. The result is a device approximately 56 times the physical area of Nvidia's H100 GPU, with commensurately greater bandwidth and computational throughput.

The strategic rationale for this approach centers on the elimination of inter-chip communication bottlenecks. In conventional multi-GPU systems used for training large AI models, data must be transferred between separate chips via high-speed interconnects — a process that introduces latency and consumes substantial power. By consolidating all processing onto a single wafer, Cerebras claims to achieve dramatically lower latency and higher data throughput for workloads such as training frontier large language models, where the sheer volume of parameters can exceed one trillion.

Despite the compelling technical narrative, Cerebras's path to its IPO has been fraught with complications. The company's initial filing in September 2024 was followed by an extended quiet period, during which concerns surfaced regarding its customer concentration — reportedly deriving a substantial proportion of revenue from a single contract with G42, an Abu Dhabi-based technology conglomerate backed by a sovereign wealth fund. Furthermore, evolving U.S. export controls on advanced AI chips destined for Gulf states and other geopolitically sensitive regions introduced uncertainty about the durability of such revenue streams.

The amended filing appears to have resolved or mitigated these concerns sufficiently to proceed. Cerebras has reportedly diversified its customer pipeline and provided additional disclosures regarding its export compliance framework. The company has also highlighted its partnerships with leading cloud service providers and its growing traction with enterprise customers seeking alternatives to Nvidia's dominant ecosystem. Analysts note that the IPO will test whether institutional investors are prepared to pay a premium for a pure-play AI chip challenger in a market where Nvidia commands an estimated 80 percent share.